1. Introduction

For many companies, the introduction of AI is at the top of the strategic agenda. In practice, however, AI often fails to deliver real added value because the right data is hard to access and its quality is insufficient.

To be usable for AI applications, data must meet certain requirements: it must be of high quality, have a clear semantic structure, and be understandable and relevant in its business context. Achieving this so-called AI readiness is a challenge, especially when data from SAP systems is to be used.

Until now, access to SAP data for data science and AI platforms was only possible via complex replication mechanisms. This led to redundancies, delays, and data governance issues.

With SAP Business Data Cloud (BDC), SAP now aims to address this problem fundamentally with an open, semantically consistent, AI-enabled data platform.

We have taken a detailed look at SAP BDC; below, we provide a practical assessment of its added value, technical options, and typical deployment scenarios.

2. SAP Business Data Cloud

What is the Business Data Cloud?

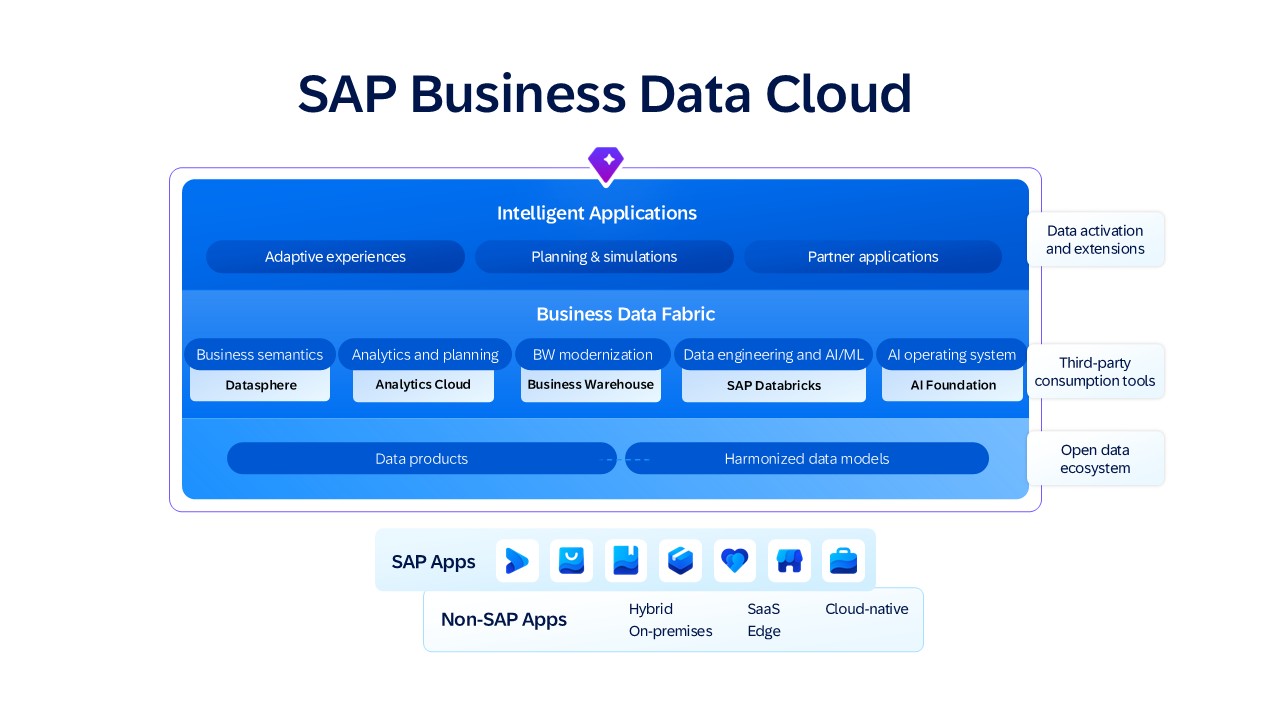

SAP Business Data Cloud (BDC) is a new, cloud-native data platform from SAP, provided on the SAP Business Technology Platform (BTP). As a software-as-a-service (SaaS) solution, it is intended to standardize and manage SAP and non-SAP data and make it usable for modern use cases. To this end, the BDC combines existing SAP solutions such as Datasphere, Analytics Cloud, and BW and extends them with new AI functions, including the native integration of Databricks.

Key Features and Architectural Principles

A core principle of the BDC is central data integration across system boundaries: it integrates data from SAP and non-SAP systems and makes it available as standardized data products while preserving the business context. In doing so, the BDC follows the principle of data sharing instead of data replication, i.e. data is accessed at the source rather than through redundant copies. This avoids redundancy and keeps the data under SAP governance. SAP Datasphere provides central modeling, access control, and role management, so that data integrity and consistent access rights are ensured. SAP also provides ready-to-use applications (“intelligent applications”) that can include AI-based analyses, planning, and metrics and are accessible via the BDC cockpit.

The concept of data as a product is also new: data objects carry metadata such as semantics, a description, quality requirements, and approval processes. This is an important step forward for data mesh and data governance, as it yields curated, clearly defined data packages.
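To make the idea of data as a product more concrete, here is a minimal Python sketch of a data product that carries its own semantics, description, quality requirements, and approval status, and can only be released once its quality rules pass. The class names, fields, and the null-ratio quality rule are hypothetical illustrations chosen for this example; they are not SAP's actual data product schema or API.

```python
from dataclasses import dataclass, field
from enum import Enum


class ApprovalStatus(Enum):
    # Hypothetical approval states; real workflows are defined in the platform.
    DRAFT = "draft"
    IN_REVIEW = "in_review"
    APPROVED = "approved"


@dataclass
class QualityRule:
    # A named check on one column, e.g. "no missing customer IDs".
    name: str
    column: str
    max_null_ratio: float  # maximum allowed share of missing values (0.0 to 1.0)


@dataclass
class DataProduct:
    name: str
    description: str
    semantics: dict[str, str]  # column name -> business meaning
    quality_rules: list[QualityRule] = field(default_factory=list)
    status: ApprovalStatus = ApprovalStatus.DRAFT

    def check_quality(self, rows: list[dict]) -> dict[str, bool]:
        """Evaluate every quality rule against a list of records."""
        results = {}
        for rule in self.quality_rules:
            missing = sum(1 for row in rows if row.get(rule.column) is None)
            ratio = missing / len(rows) if rows else 0.0
            results[rule.name] = ratio <= rule.max_null_ratio
        return results

    def approve(self, rows: list[dict]) -> None:
        """Release the product only if all quality rules pass."""
        if not all(self.check_quality(rows).values()):
            raise ValueError(f"{self.name}: quality rules not met")
        self.status = ApprovalStatus.APPROVED


# Usage with made-up sample data.
orders = DataProduct(
    name="SalesOrders",
    description="Released sales orders per customer",
    semantics={
        "customer_id": "Business partner ID of the sold-to party",
        "net_value": "Net order value in document currency",
    },
    quality_rules=[QualityRule("customer_id_complete", "customer_id", 0.0)],
)
sample = [
    {"customer_id": "C1", "net_value": 100.0},
    {"customer_id": "C2", "net_value": 50.0},
]
orders.approve(sample)
print(orders.status)  # ApprovalStatus.APPROVED
```

The point of the design is that metadata and quality checks travel with the data object itself, so a consumer can see what a column means and whether the product was released, without consulting a separate document.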

With SAP Databricks, the BDC now offers its own platform for independently developing and deploying business-specific AI solutions.

Openness and Integration

SAP BDC follows a multi-cloud approach and can be operated on AWS, Google Cloud and Azure. It also offers a variety of standardized connectors and open interfaces for connecting non-SAP systems.

The aim is to combine SAP and non-SAP data in a common, semantically enriched platform and thus create a central access point to the company's wealth of data. SAP explicitly follows an open data ecosystem approach that largely dispenses with data copies and avoids lock-in. Business users and data scientists thus work on the same semantically harmonized data, each with the appropriate tools and roles.

Data scientists in particular benefit from zero-copy integration with SAP Databricks: for AI and analytics use cases, data no longer needs to be exported from SAP systems but can be read directly from the source system (more on this in chapter 3).

Governance, Security, and Control

The BDC is managed centrally via the BDC cockpit, where data products and models can be defined and managed, access rights and roles assigned, and data sources and data flows traced.

Identity and authorization management is also controlled centrally here, so that data and access rights can be managed uniformly and compliantly across platforms. In addition, the BDC provides logging of data access, changes, and movements, which is important for audits, audit-proof record keeping, and GDPR-compliant data use.
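The combination of central role assignment and access logging can be illustrated with a small Python sketch: every read attempt is checked against the user's roles and written to an audit log, whether it is granted or denied. The role model, data structures, and log fields are hypothetical and only illustrate the principle; they do not reflect how the BDC actually implements authorization or logging.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AccessControl:
    # Hypothetical role model: role name -> set of data products the role may read.
    role_grants: dict[str, set[str]]
    # Hypothetical user assignments: user name -> set of roles.
    user_roles: dict[str, set[str]]
    audit_log: list[dict] = field(default_factory=list)

    def can_read(self, user: str, product: str) -> bool:
        """A user may read a product if any of their roles grants it."""
        roles = self.user_roles.get(user, set())
        return any(product in self.role_grants.get(role, set()) for role in roles)

    def read(self, user: str, product: str) -> bool:
        """Check access and record every attempt, granted or denied."""
        allowed = self.can_read(user, product)
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "product": product,
            "action": "read",
            "granted": allowed,
        })
        return allowed


# Usage with made-up users and roles.
acl = AccessControl(
    role_grants={"controller": {"SalesOrders"}},
    user_roles={"alice": {"controller"}, "bob": set()},
)
acl.read("alice", "SalesOrders")  # granted
acl.read("bob", "SalesOrders")    # denied, but still logged
for entry in acl.audit_log:
    print(entry["user"], entry["product"], entry["granted"])
```

Logging denied attempts as well as granted ones is what makes such a log useful for audits: it shows not only who accessed data, but also who tried to.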

3. SAP Databricks

Introducing Databricks

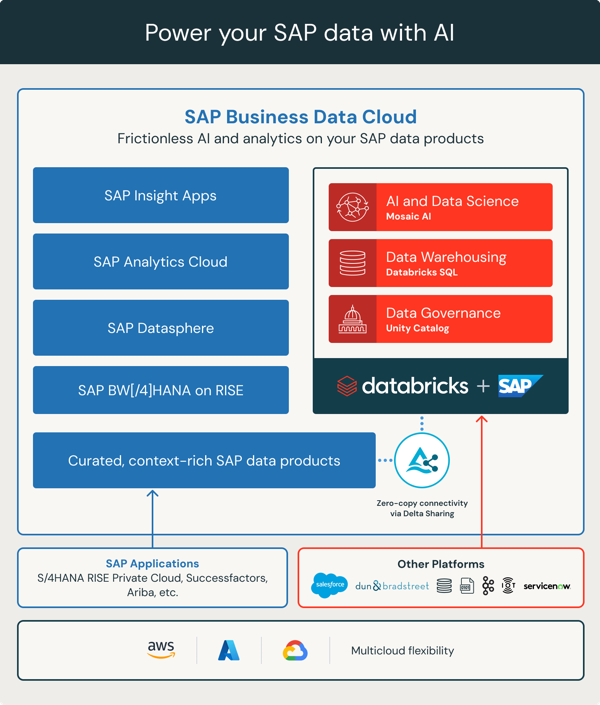

SAP Databricks is the new, SAP-managed integration of the Databricks Data Intelligence Platform into the Business Data Cloud. Databricks is a cloud-based platform for data engineering, data science, analytics, and AI.

It is based on the data lakehouse architecture, which combines the flexibility of data lakes with the performance of data warehouses. It allows structured, semi-structured, and unstructured data to be stored and processed in one place, and the stored data can be used directly for analytics without complex ETL processes.

Thanks to cloud technology, resources can be scaled as needed. The lakehouse also offers data governance, access control, and cataloging of data assets via Unity Catalog.

Databricks uses the open Delta Sharing protocol to share data across platforms. The data remains at the source and can be accessed by multiple authorized consumers. This approach is also known as zero-copy sharing.
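In the open Delta Sharing protocol, a recipient receives a small profile file (server endpoint and bearer token) and addresses a shared table as `<profile-file>#<share>.<schema>.<table>`. When queried, the server returns short-lived links to the underlying data files, so the recipient reads the data where it is stored instead of receiving a copy. The following standard-library-only Python sketch shows just this addressing step and builds, but does not send, the query request. It assumes a bearer-token profile; the endpoint and the share, schema, and table names are made-up examples. In practice, one would use an official client such as the `delta-sharing` Python connector rather than hand-building requests, and how the BDC provisions shares for SAP Databricks is not covered here.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class SharingProfile:
    endpoint: str      # Delta Sharing server URL provided by the data provider
    bearer_token: str  # credential issued to the recipient


def load_profile(profile_json: str) -> SharingProfile:
    """Parse the contents of a bearer-token Delta Sharing profile file."""
    data = json.loads(profile_json)
    return SharingProfile(
        endpoint=data["endpoint"].rstrip("/"),
        bearer_token=data["bearerToken"],
    )


def parse_table_url(table_url: str) -> tuple[str, str, str, str]:
    """Split '<profile-path>#<share>.<schema>.<table>' into its parts."""
    profile_path, sep, coordinates = table_url.rpartition("#")
    parts = coordinates.split(".")
    if not sep or len(parts) != 3:
        raise ValueError(f"invalid table URL: {table_url}")
    share, schema, table = parts
    return profile_path, share, schema, table


def query_request(profile: SharingProfile, share: str, schema: str,
                  table: str) -> tuple[str, dict[str, str]]:
    """Build the REST call that asks the server for the table's data files."""
    url = f"{profile.endpoint}/shares/{share}/schemas/{schema}/tables/{table}/query"
    headers = {
        "Authorization": f"Bearer {profile.bearer_token}",
        "Content-Type": "application/json",
    }
    return url, headers


# Usage with a made-up profile and table URL.
profile = load_profile(
    '{"shareCredentialsVersion": 1, '
    '"endpoint": "https://sharing.example.com/delta-sharing/", '
    '"bearerToken": "<token>"}'
)
_, share, schema, table = parse_table_url("config.share#sales_share.sap.sales_orders")
url, headers = query_request(profile, share, schema, table)
print(url)
# https://sharing.example.com/delta-sharing/shares/sales_share/schemas/sap/tables/sales_orders/query
```

Only the profile and the table coordinates are exchanged up front; the data itself stays in the provider's storage until a consumer reads it through the links the server hands out.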

Databricks is specifically designed to process and analyze large volumes of data and to develop AI models. It is based on Apache Spark, which enables fast, distributed data processing and can also be used to build efficient ETL pipelines for various data sources. Databricks also covers the complete end-to-end workflow for developing and operating AI models. This is supported by MLflow, an established tool for managing the entire machine learning life cycle, which in particular makes model monitoring and versioning easier.

SAP Databricks: Special features of integration into Business Data Cloud

Thanks to the native integration of Databricks into SAP BDC, data experts benefit from zero-copy access to business data, which speeds up analyses considerably and avoids lengthy, complex data exports.

Another advantage of the native integration is that ready-made SAP data products can be used. This preserves SAP's business semantics and metadata and allows analyses to take place directly in a business context. Data products largely eliminate the need for ETL processes, as they are maintained and provided centrally. However, this standardization also restricts individual data modeling compared with a standalone Databricks setup.

External data sources (e.g. interfaces, legacy systems, public data) can also be integrated via Delta Sharing or connectors to enrich SAP core data.

The integrated Databricks is ideal for companies that want to build on their existing SAP data and are looking for a quick start with AI. It is also a good fit when companies place high demands on compliance and governance and want to keep integration effort to a minimum.

For more sophisticated data engineering scenarios, e.g. with a wide variety of data sources, a landscape that is not SAP-centric, or complex transformation logic, a standalone Databricks environment can still be used.

4. Conclusion

With the Business Data Cloud, SAP is creating a modern data architecture that does not replace existing systems such as Datasphere, Analytics Cloud, and BW but integrates and extends them. Existing investments are therefore protected and can be migrated gradually to a future-proof, AI-enabled data platform.

The Business Data Cloud is based on three major innovations: the consistent, semantically enriched data model creates a stable basis for analysis, planning, and AI. Zero-copy access to business data prevents redundant data storage and significantly speeds up analyses while maintaining data governance. And with the new SAP Databricks, it is possible for the first time to develop AI models and run complex data processing directly on SAP data.

With the Business Data Cloud, SAP has removed significant technical obstacles. However, companies must take the last step towards business AI themselves.